So, you’re planning a web scraping project and don’t know where to start? Or you have a rough idea but don’t know how to choose the right scraping tool?

Either way, I’ll help you out – in this post, I’ll go over how to start a web scraping project and choose the right proxy type for your scraping projects. I’ll also cover the pros and cons of in-house web scrapers to help you decide whether building one is worth it or if it’s better to just buy one. Let’s get into it.

Web scraping project ideas

I think the best way to start working on your web scraping project is to get a general idea of what it’s for. Below are some cases that businesses use web scraping for, but if you’d rather watch a video, here’s one that covers use cases pretty well.

Market research

SEO monitoring

Price monitoring

Review monitoring

Brand protection

Travel fare aggregation

Planning a project on web scraping: where to start?

Alright, so you’re planning a web scraping project. Whether you have a business or you need some info personally, you should decide what sort of data you’ll need to extract. That can be anything: pricing data, SERP data from search engines, etc. For the sake of an example, let’s say you need the latter – SERP data for SEO monitoring. What’s next?

Then, proxy servers will gather your required data – your tool should be able to go about it without reaching implemented requests limit and slip under anti-scraping measures.

Before jumping to look for a proxy provider, first, you need to know how much data you’ll be needing. In other words – how many requests you’ll be making per day, etc. Based on data points (or request volumes) and traffic you’ll be needing, it will be easier for you to choose the right proxy type for the job.

But what if you’re not sure how many requests you’ll be making and what traffic you’ll be generating on your web scraping project? Well, there are a few solutions for this issue: you can get a trial of any web scraping tool. Or you can choose a tool that doesn’t require you to know the exact numbers and allows you just to do the job you need.

Once you have the numbers or at least have a rough idea of what targets you need to scrape, you’ll find it a lot easier to choose the right tool.

Choosing the right proxy type for web scraping projects

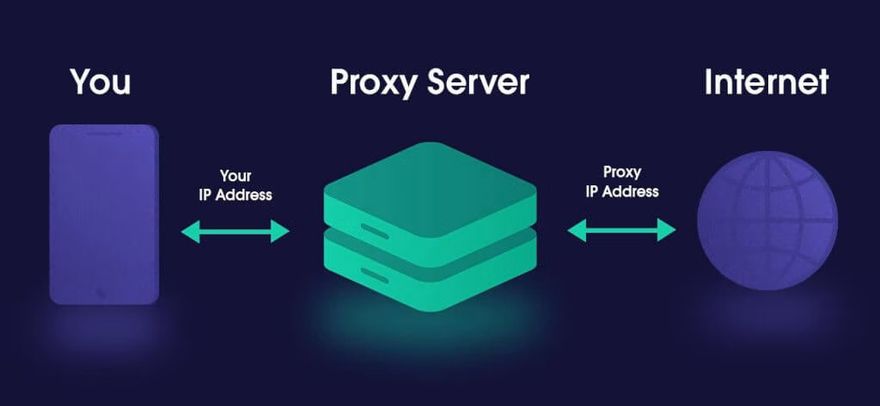

Okay, so there are two main proxy types used for scraping – datacenter and residential – and you’ll have to choose which one to use for your project. There’s a lot of misconception going around that residential proxies are the best as they provide ultimate anonymity. In fact, all proxies provide anonymity online – that’s sort of their purpose. The type of proxy you need to buy actually depends solely on what web scraping project you’ll be doing.

If you need a proxy for, let’s say, market research – a datacenter proxy will be more than enough for you. Actually, you might even go for semi-dedicated proxies. They’re fast, stable, and, most of all – a lot cheaper than residential proxies.

However, if you want to scrape more challenging targets, i.e., data for sales intelligence – a residential proxy will be a better choice. Most websites can detect they’re being scraped, and getting blocked on such websites is a lot more likely. With residential proxies, however, it’ll be harder to get blocked since they look like real IPs.

TL;DR: here’s a table of possible use cases and best proxy solutions for each one:

Market research

Brand protection

Email protection

Travel fare aggregation

Ad verification

Let’s talk a bit more about three other use cases. These include the earlier-mentioned projects based on web scraping like sales intelligence, SEO monitoring, and product page intelligence. Even though you can use proxies for these particular use cases, you’ll find yourself struggling with one of the most common bottlenecks found in web scraping. It’s time. Or not enough of it.

On that note, let’s jump into another topic – the pros and cons of using in-house web scrapers with proxies and see whether using speeds up things.

Pros and cons of in-house web scrapers

Okay, so there are two approaches to web scraping: maintaining and working with an in-house web scraper or outsourcing a web scraper from third-party providers.

Let’s take a closer look at the pros and cons of in-house web scraping to help you decide which way to go.

Pros of in-house web scraping projects

More control

Having an in-house solution for your web scraping project ideas gives you full control over the process. You can customize the scraper to suit your needs better. Thus, if you’re an experienced developer, you’re better equipped to build one for yourself.

Faster setup speed

Getting an in-house web scraper up and running can be a faster process than outsourcing from third-party providers. An in-house team may better understand your requirements and set up the web scraper faster.

Quicker resolution of issues

With a third-party web scraping tool, you’ll have to raise a support ticket and wait for some time before the issue gets attended to. Meanwhile, if you run into an issue, as a developer, you can get to fixing it right away.

Cons of in-house web scraping projects

Higher cost

Setting up an in-house web scraper can be quite expensive. Server costs, proxy costs, as well as maintenance costs can add up pretty quickly. If you’re not a developer, you’ll also need their help, which means additional costs.

Maintenance challenges

Maintaining an in-house web scraping setup can be a real challenge. Servers need to be kept in optimal conditions, and the web scraping program must be constantly updated to keep up with changes to the websites being scraped.

Associated risks

There are certain legal risks associated with web scraping if not done properly. Many websites often place restrictions on web scraping activity. A third-party provider with an experienced team of developers will be better able to follow the best practices to scrape websites safely. If you’re a developer yourself, make sure to think it through or even seek legal advice.

Conclusion

I hope this article has helped with your web scraping project planning and answered proxy-related questions a bit more thoroughly. I wish you the best of luck with your project!

Top comments (1)

If you have any questions, please leave a comment and we will make sure to answer as quickly as possible! :)