Modern trusted proxy websites support a range of web data gathering tasks, and clients buy residential and mobile proxies for purposes that include cybersecurity. When processed at the enterprise level, the collected information is converted into a readable form suitable for further manual and AI-based analysis. This is called Business Intelligence.

Dexodata offers rotating proxies in Saudi Arabia, Greece and other countries with a free trial, and serves them as a core ingredient of data parsing. The other integral component is the parsing software itself, defined by its source code. Python has already become the most popular programming language for data processing and is expected to strengthen its position in 2023. Let’s take a look at the most interesting trends in its use.

What is Python?

Python is an interpreted language: unlike compiled languages, it runs scripts without a separate build step, which makes it convenient for iterative data collection with the best datacenter proxies. Its latest version, 3.11.0, is up to 60 percent faster than the preceding version, according to its developers. This version also offers a number of distinct features, such as:

- Clearer and more detailed error messages that point to the exact location of an error.

- A built-in parser for TOML (Tom’s Obvious, Minimal Language) files, the `tomllib` module.

- Experimental support for WebAssembly platforms.

- Exception groups and the new `except*` syntax for handling several unrelated exceptions at once.

- The `LiteralString` type for annotating literal strings.

- Variadic generics, which let a generic type accept an arbitrary number of type parameters.

Where is Python applied?

Python holds 4th place among the most popular languages overall and 3rd among people learning to code, according to the Stack Overflow 2022 Developer Survey. Below we explain why Python is prominent among data extraction experts, while Dexodata serves as a trusted proxy website for access from Japan, Sweden and other locations. The most promising spheres for Python in 2023 are expected to be:

- Cloud storage

- Game development

- Academic teaching

- Big data processing

- Web and mobile app development

- Machine learning, neural networks and AI

- Programming language integration

- Network administration

- Web scraping

Why use Python for data extraction?

Simply put, the main purpose is automation. The characteristics listed below have made Python a popular scraping tool, including for AI-based data acquisition models. Python successfully automates data gathering and distribution through paid and free-trial rotating proxies thanks to:

1. Concise and simple code

Python code resembles plain English, and collecting data from `<div>` tags takes about twenty lines of code. Use the best datacenter proxies to change IPs while the script runs.
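As an illustration of that brevity, here is a minimal sketch that collects the text inside `<div>` tags. To stay dependency-free it uses the standard library’s `html.parser.HTMLParser` rather than BeautifulSoup; the sample HTML is made up for the example.

```python
from html.parser import HTMLParser


class DivTextParser(HTMLParser):
    """Collect non-empty text found inside <div> elements, including nested ones."""

    def __init__(self):
        super().__init__()
        self.depth = 0   # number of <div> tags currently open
        self.texts = []  # collected text fragments

    def handle_starttag(self, tag, attrs):
        if tag == "div":
            self.depth += 1

    def handle_endtag(self, tag):
        if tag == "div" and self.depth > 0:
            self.depth -= 1

    def handle_data(self, data):
        # Keep only text that sits inside at least one open <div>
        if self.depth > 0 and data.strip():
            self.texts.append(data.strip())


def extract_div_text(html: str) -> list[str]:
    parser = DivTextParser()
    parser.feed(html)
    parser.close()
    return parser.texts


sample = "<div class='item'>Price: 10</div><p>Skipped</p><div><span>In stock</span></div>"
print(extract_div_text(sample))  # ['Price: 10', 'In stock']
```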

2. Appropriate speed

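The latest version is on average 1.22 times faster than its predecessor, according to the developers. Python code still runs slower than C++, which is compiled to machine code ahead of time, but its speed is sufficient for gathering information, where network latency is usually the bottleneck.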

3. Easy-to-learn syntax without `{}` braces, semicolons, etc.

Because Python uses indentation to mark code blocks, users can easily distinguish blocks and scopes.

4. Many ready-to-go solutions for collecting and processing data

BeautifulSoup and Selenium can parse almost any website, while many other modules (pandas, Matplotlib) simplify the analysis.

5. High compatibility

A Python parser easily exchanges data and requests with other packages and tools, with minimal delay.

6. Dynamic typing

Python lets you create and use variables when needed, without declaring their data types in advance.

7. Anonymous functions (lambda)

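Lambda expressions define small functions inline, without a name. A lambda can take two or more arguments, which makes it handy as a sort key, a filter condition or a reducer in a script.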
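A short sketch of typical lambda use in a scraping script, with made-up product records: one-argument lambdas serve as a filter predicate and a sort key, and a two-argument lambda is passed to `functools.reduce` to total the prices.

```python
from functools import reduce

# Hypothetical records, shaped like the output of a product-page scraper
products = [
    {"name": "Laptop", "price": 999.0, "in_stock": True},
    {"name": "Mouse", "price": 19.5, "in_stock": False},
    {"name": "Monitor", "price": 189.0, "in_stock": True},
]

# One-argument lambdas: keep in-stock items, then order them by price
available = sorted(filter(lambda p: p["in_stock"], products), key=lambda p: p["price"])

# Two-argument lambda: accumulate the total price of the available items
total = reduce(lambda acc, p: acc + p["price"], available, 0.0)

print([p["name"] for p in available])  # ['Monitor', 'Laptop']
print(total)  # 1188.0
```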

8. Friendly community

According to Stack Overflow, Python has been the third most popular language among people learning to code for three years in a row, so there are plenty of guides, use cases and articles to help solve possible problems.

9. Multitasking

The same language handles both main and secondary parsing tasks at once: for example, buying residential and mobile proxies in Vietnam, Ireland, Korea or other places, setting them up via API, and changing IPs during data mining. Python also saves files, creates and populates databases, works with regular expressions and strings, etc.
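For instance, saving results needs nothing beyond the standard library. This sketch, using hypothetical product rows, stores them in an SQLite database (in memory here) with `sqlite3` and exports them as CSV text with the `csv` module; the table name and columns are assumptions made for the example.

```python
import csv
import io
import sqlite3

# Hypothetical scraped rows: (product name, price)
rows = [("Laptop", 999.0), ("Mouse", 19.5)]


def save_rows(conn: sqlite3.Connection, records) -> None:
    """Create the table if needed and insert records with a parameterized query."""
    conn.execute("CREATE TABLE IF NOT EXISTS products (name TEXT, price REAL)")
    conn.executemany("INSERT INTO products VALUES (?, ?)", records)
    conn.commit()


def export_csv(conn: sqlite3.Connection) -> str:
    """Return the table contents, cheapest first, as CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["name", "price"])
    writer.writerows(conn.execute("SELECT name, price FROM products ORDER BY price"))
    return buffer.getvalue()


conn = sqlite3.connect(":memory:")  # pass a file path such as "products.db" to persist
save_rows(conn, rows)
print(export_csv(conn))
```

Parameterized `?` placeholders keep scraped text from being interpreted as SQL, which matters when the data comes from arbitrary web pages.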

10. Versatility

You can target both dynamic and static sites by choosing the appropriate libraries.

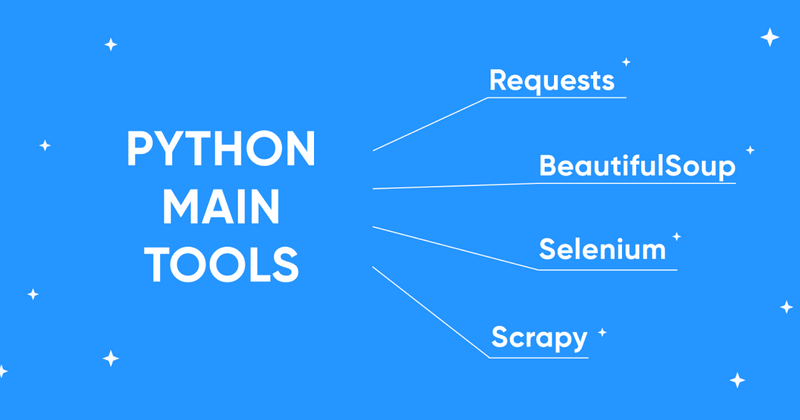

What are Python’s main tools?

With Python, you install only the libraries your task requires, just as clients buy residential and mobile proxies or datacenter IPs from Dexodata depending on their needs. Since the goal here is to obtain information from the Web, we will name only the relevant modules and their characteristics.

These are the most widely used libraries and modules:

- Requests

- BeautifulSoup

- Selenium

- Scrapy

Requests is responsible for sending HTTP and HTTPS requests and for working with proxies, headers, cookies and sessions through a simple API. It is a third-party package that is more convenient and feature-rich than the standard library’s urllib, and it is itself built on top of urllib3.
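Below is a minimal sketch of rotating proxies between requests. To keep it dependency-free it uses the standard library’s `urllib.request.ProxyHandler` rather than Requests; Requests accepts the same `{"http": ..., "https": ...}` mapping through its `proxies=` argument. The proxy URLs, credentials and User-Agent string are placeholders, not real Dexodata endpoints.

```python
import itertools
import urllib.request

# Placeholder endpoints (documentation-range IPs); substitute real proxy credentials
PROXY_POOL = [
    "http://user:pass@203.0.113.10:8080",
    "http://user:pass@203.0.113.11:8080",
    "http://user:pass@203.0.113.12:8080",
]


def proxy_cycle(pool):
    """Yield a proxy mapping per request, rotating through the pool endlessly."""
    for proxy in itertools.cycle(pool):
        yield {"http": proxy, "https": proxy}


def make_opener(proxies, user_agent="Mozilla/5.0 (example scraper)"):
    """Create an opener that routes traffic through the given proxies."""
    opener = urllib.request.build_opener(urllib.request.ProxyHandler(proxies))
    opener.addheaders = [("User-Agent", user_agent)]  # replaces urllib's default header
    return opener


def fetch(url, proxies, timeout=10):
    """Download a page through the current proxy and return it as text."""
    with make_opener(proxies).open(url, timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


# Usage (performs real network requests, so it is left commented out):
# rotation = proxy_cycle(PROXY_POOL)
# for url in ["https://example.com/page1", "https://example.com/page2"]:
#     html = fetch(url, next(rotation))
```

Each call to `next(rotation)` hands the next proxy to `fetch`, so consecutive pages are requested from different IP addresses.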

BeautifulSoup (the bs4 package) is the main library for pulling data out of HTML and XML files. It builds a parse tree from the page source code and lets you extract the data you choose in a readable form. BeautifulSoup does not execute JavaScript, so it has limited access to dynamically rendered content, but that is what Selenium is great for.

Selenium (current version 4.8.0) parses dynamic HTML and AJAX content. It is known for outstanding automation capabilities, driving the chosen browser through WebDriver. Selenium Grid, the project’s tool for running browsers on remote machines, is IPv6-compatible and works via HTTPS; IPv6 is an IP type our trusted proxy website also provides.

Scrapy is a web crawler with a wide range of customizable features. It can collect information from several pages at once, while its AutoThrottle extension automatically adjusts the crawling speed based on the load of both Scrapy and the target website. The latest version, 2.7.1, has its own JSON decoder and can add hints about object types to simplify reading the database.
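AutoThrottle is switched on through the project settings. A sketch of the relevant lines in a Scrapy `settings.py` follows; the setting names come from Scrapy’s AutoThrottle documentation, while the numeric values are example choices to tune per site, not recommendations.

```python
# settings.py (fragment of a Scrapy project)

# Let AutoThrottle adapt download delays to the observed server latency
AUTOTHROTTLE_ENABLED = True
AUTOTHROTTLE_START_DELAY = 5.0         # initial download delay, in seconds
AUTOTHROTTLE_MAX_DELAY = 60.0          # upper bound for the delay when responses are slow
AUTOTHROTTLE_TARGET_CONCURRENCY = 2.0  # average parallel requests per remote site
AUTOTHROTTLE_DEBUG = False             # True logs throttling stats for every response

# AutoThrottle respects this standard limit and never exceeds it
CONCURRENT_REQUESTS_PER_DOMAIN = 8
```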

What future awaits Python in 2023?

According to GitHub’s official Octoverse statistics, Python became the second most popular primary language in the GitHub community, and every fifth developer has expressed interest in working on Python-based projects. Its role in software development is expected to grow.

Based on the Finances Online review, two interesting predictions can be made about Python’s role in 2023.

- The first applies to the growing Analytics-as-a-Service (AaaS) market. Financial enterprises seem to be abandoning in-house Big Data collection and management in favor of third-party analytics. AaaS experts configure data collection solutions to the needs of a particular customer, and Python suits such work well thanks to its simple syntax and flexible modules. We advise buying residential and mobile proxies or the best datacenter proxies in advance so that parsing proceeds with minimal failures.

- The second optimistic trend for Python in 2023 applies to the rising market share of machine learning algorithms. Python has held first place as the most popular programming language for machine learning for the third year in a row, according to the Octoverse report, and the trend is increasing.

What is the role of AI-based solutions in Python web development?

Machine learning starts with terabytes of web data that must be collected and processed. Among the projects operating this way are:

- Large language models (LLMs), such as GPT-3 and ChatGPT

- Generative AI neural networks, such as Midjourney and DALL-E

- Enterprise artificial neural networks (ANNs) that predict customer behavior, automate marketing, etc.

The Dexodata team sees no obstacles to Python becoming the main software development tool in all of the market spheres mentioned above.

ANNs are also learning to collect data on their own using Deep Learning mechanisms. Today AI mostly manages logistics and warehouse inventory accounting, but the tendency is to entrust AI-driven robots with harvesting scientific and financial data for both descriptive and predictive business intelligence. Dexodata is an enterprise-level infrastructure serving IP addresses in Greece, Vietnam, Ireland, Sweden, Korea, Saudi Arabia, Japan and 100+ other countries. We offer proxies for parsing needs, including rotating proxies with a free trial.
